The 2026 Existential Mandate: Why Legacy is a Boardroom Crisis

We are operating in an industry that has fundamentally misunderstood the physical weight of its own history. Right now, up to 220 billion lines of COBOL remain in active production environments globally [11]. That aging code is not sitting idle in forgotten archives. It actively underpins approximately 85% of all Fortune 500 financial transactions today [12]. If you have ever been paged at 2 AM because a twenty-year-old batch process failed silently and halted a massive payment reconciliation pipeline, you already know the reality that vendor brochures gloss over. By 2026, legacy system modernization has moved from a localized engineering headache to an existential boardroom crisis. Preserving monolithic architectures is now financially and operationally unsustainable for any business attempting to scale.

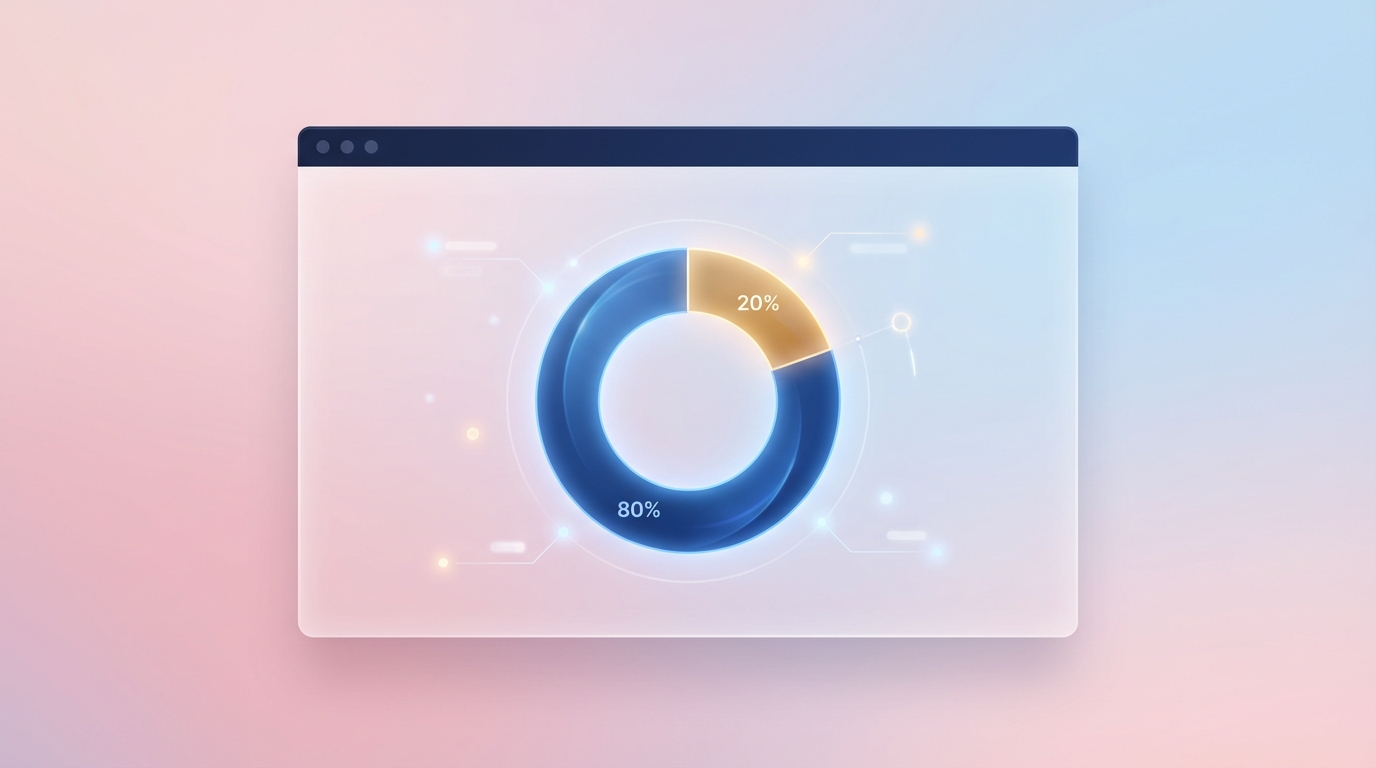

The Macroeconomics of the 80/20 Budget Trap

To understand how we got here, we have to look at the numbers. The financial consequence of this massive installed base of aging infrastructure is what industry analysts coldly term the "80/20 Budget Trap." Rigorous audits, most notably from the U.S. Government Accountability Office (GAO), consistently demonstrate a bleak reality: organizations are forced to allocate between 60% and 80% of their total technology budgets purely to Operations and Maintenance [7, 8]. In the federal sphere the share is higher still, with roughly $83 billion of a $100 billion planned budget diverted away from new capabilities just to keep the lights on [7].

Source: GAO / Gartner / Altimi (2023-2026)

This is not a uniquely public sector problem. In the private sector, engineering teams are being starved of the capital required to build competitive features because that capital is locked up in sustaining stagnation. When your operational budget is entirely consumed by patching monolithic databases, updating obsolete middleware, and keeping deprecated servers breathing, there is no runway left for actual innovation. You are essentially paying a massive tax just to stand still.

The Compounding Interest of Structural Technical Debt

The situation becomes vastly more dangerous when you factor in the financial mechanics of deferred maintenance. Technical debt does not sit quietly on a balance sheet. It functions as a compounding financial liability. Industry data indicates that the "interest" on technical debt accrues at a staggering 15% to 25% per year [24]. There is some debate in the community about how often that rate compounds. Firms like Software Modernization Services argue that it compounds quarterly [23], while organizations like Forrester and Profound Logic report annual compounding [24].

Regardless of the specific interval, the pragmatic outcome is the same. A $5 million legacy system modernization project that leadership decides to delay for three years will see its ultimate execution cost inflate by over 50%. This happens because the surrounding ecosystem keeps moving forward. APIs change, security standards evolve, and the architectural entropy of your unaddressed monolith deepens. Deferring modernization is not a cost-saving measure. It is a high-interest loan taken out against the future stability of your engineering organization.
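The arithmetic behind that claim can be sketched in a few lines. This is a minimal illustration, assuming the 15% to 25% rate is applied to the original $5 million estimate using ordinary compound growth, and that "quarterly" means a nominal annual rate split evenly across four periods. The function name and that quarterly convention are our own assumptions, not something the cited sources specify.

```python
def inflated_cost(base_cost: float, annual_rate: float, years: int,
                  periods_per_year: int = 1) -> float:
    """Cost of a deferred project under standard compound growth.

    periods_per_year=1 models annual compounding, 4 models quarterly
    compounding of a nominal annual rate (an assumed convention).
    """
    periodic_rate = annual_rate / periods_per_year
    return base_cost * (1 + periodic_rate) ** (years * periods_per_year)


BASE_COST = 5_000_000  # the $5 million project from the text
DELAY_YEARS = 3

for rate in (0.15, 0.25):
    for periods, label in ((1, "annual"), (4, "quarterly")):
        cost = inflated_cost(BASE_COST, rate, DELAY_YEARS, periods)
        increase_pct = (cost / BASE_COST - 1) * 100
        print(f"{rate:.0%} {label:<9} -> ${cost:,.0f} (+{increase_pct:.0f}%)")
```

Even the most forgiving case, 15% compounded annually, multiplies the cost by 1.15 cubed, or roughly 1.52. That is where the "over 50%" figure comes from. Every other combination in the loop is worse.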

The Rapidly Evaporating Legacy Talent Pool

To compound the financial crisis, we are facing a severe demographic cliff that threatens our ability to even maintain these legacy systems. The labor pool required to sustain the status quo is evaporating. In 2024, fewer than 2,000 new COBOL developers graduated globally [13]. At the same time, the average age of a mainframe programmer continues to climb toward retirement age. Data from 2025 indicates that 72% of experienced mainframe engineers are slated to retire by 2030 [12].

When those senior engineers leave, they take decades of undocumented, tribal knowledge with them. They are the only people who know why a specific job control script must run at exactly 1:15 AM to prevent a race condition in the core database. Once they exit the building, the organization is left flying completely blind. Replacing them is nearly impossible because modern engineering graduates have zero interest in maintaining monolithic architecture from the 1990s. We are rapidly approaching an event horizon where we will simply not have the human capital required to keep these legacy systems operational, making immediate modernization the only defensible path forward.

Quantifying the Drag: Telemetry on the True Cost of Inaction

The true cost of technical debt is often obscured by terrible reporting metrics. Business stakeholders tend to measure engineering velocity strictly by the delivery of new features, remaining completely blind to the friction hiding beneath the surface. However, when we deploy rigorous telemetry to analyze what developers are actually doing hour by hour, the data paints a terrifying picture of organizational drag.

The $1.52 Trillion Software Liability

We must first look at the raw macroeconomic numbers to understand the scale of the liability. Industry models suggest that the global estimated cost of application maintenance has eclipsed $1.68 trillion annually [1]. In the United States alone, the accumulated software technical debt reached an estimated $1.52 trillion by 2025 [10].

This financial burden is heavily driven by the massive footprint of end-of-life frontend and backend frameworks that refuse to die. We are not just talking about mainframe COBOL. Millions of enterprise applications continue to rely on legacy .NET architectures, pre-8.0 PHP monoliths, and deprecated JavaScript frameworks like AngularJS [1]. Running an enterprise on an application built with a framework that has been out of official support for years is an act of extreme negligence, yet it happens every single day due to the sheer cost of refactoring.

Developer Attrition and the 'Innovation Drag'

The financial cost is staggering, but the human cost is what actually breaks an engineering department. According to large-scale developer surveys from Stack Overflow and JetBrains in 2024 and 2025, technical debt is now the undisputed dominant workplace frustration globally [3, 19]. It is not a lack of perks or compensation that drives engineers crazy. It is the brittleness of the code they are forced to work with.

Rigorous telemetry indicates that engineers waste approximately 33% of their active working time navigating, debugging, and maintaining fragile legacy code [5, 6].

Source: Stripe / JetBrains (2025)

Let that number sink in. In a month of roughly 21 working days, that is about seven full working days lost per developer, every single month, just to wrestle with spaghetti code. When an engineer has to spend three days tracing a single variable through twelve undocumented legacy classes just to safely add a button to a user interface, you have a systemic operational failure. This dynamic creates a massive "innovation drag." It destroys team morale and directly accelerates attrition. Data shows that engineers are 2.5x more likely to leave organizations heavily burdened by technical debt [6]. You simply cannot retain top-tier engineering talent if you force them to spend a third of their working hours shoveling coal into a broken engine.

Security Vulnerabilities in End-of-Life Frameworks

Beyond the productivity sink, legacy systems represent a massive, unquantifiable security risk. When frameworks reach their end-of-life status, they no longer receive official security patches. This means that when a new vulnerability is discovered, there is no cavalry coming to provide a fix.

The average time from vulnerability disclosure to active exploitation in unsupported web frameworks dropped to under four days in recent years [1]. For example, applications still running AngularJS carry over 35 known unpatched high and medium severity vulnerabilities [1]. Every day that a legacy frontend remains in production, the organization is effectively leaving the front door unlocked. Furthermore, these aging stacks consistently fail to meet modern web performance standards, such as Google's Core Web Vitals, which actively damages search visibility and user retention. The cost of inaction is no longer just a slow deployment cycle. It is the active acceptance of a critical data breach waiting to happen.

The AI Productivity Paradox in Brownfield Environments

When generative AI and Large Language Models hit the mainstream engineering consciousness, every executive immediately assumed we finally had a silver bullet. The vendor pitch was intoxicating. You simply point an AI at your monolithic legacy codebase, press a button, and watch as it seamlessly refactors decades of technical debt into pristine microservices. This narrative was largely fueled by early metrics showing massive speed advantages in isolated, greenfield environments. However, hard telemetry from 2025 and 2026 reveals a deeply problematic reality that researchers call the AI Productivity Paradox.

The Context Window Performance Cliff

To understand why AI struggles so profoundly with legacy code, you must understand the architecture of the tools themselves. AI models operate within strict context windows. They can only "see" a limited amount of code at any given moment. In a greenfield project, where a developer is building a new application from scratch, this is perfectly fine. The AI can generate standalone functions or boilerplate infrastructure with remarkable accuracy, yielding documented net productivity gains of 30% to 40% [3, 4].

However, legacy modernization is a brownfield exercise. You are dealing with highly coupled, deeply entangled logic spread across millions of lines of code. Stanford University's large-scale telemetry study identified a severe "Context Window Performance Cliff." They noted that AI effectiveness drops sharply from 90% down to 50% as codebase complexity and historical shadow logic increase [3]. An AI cannot accurately refactor a module if it cannot see the undocumented dependency hiding three directories away. It will confidently generate code that looks syntactically correct but fundamentally breaks the historical business constraints of the system.
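To make the "cannot see it" problem concrete, here is a rough sketch of what happens when files are packed into a fixed token budget. Everything in it is an assumption for illustration: the four-characters-per-token ratio is a crude rule of thumb rather than a real tokenizer, the file names and sizes are invented, and real assistants use more sophisticated retrieval than first-come packing.

```python
def estimate_tokens(num_chars: int, chars_per_token: float = 4.0) -> int:
    """Very rough token estimate; the 4:1 ratio is an assumed heuristic."""
    return int(num_chars / chars_per_token)


def visible_files(files: dict[str, int],
                  window_tokens: int) -> tuple[list[str], list[str]]:
    """Pack files into the window in the given order.

    `files` maps a path to its size in characters. Returns the paths that
    fit (seen) and the paths that were left out (unseen).
    """
    seen, unseen, used = [], [], 0
    for path, num_chars in files.items():
        cost = estimate_tokens(num_chars)
        if used + cost <= window_tokens:
            seen.append(path)
            used += cost
        else:
            unseen.append(path)
    return seen, unseen


# Hypothetical repository slice: the file being edited, a helper it
# imports, and a large batch job that quietly rewrites the same table.
repo = {
    "billing/invoice.py": 40_000,
    "billing/tax_rules.py": 120_000,
    "batch/nightly_recon.py": 400_000,
}
seen, unseen = visible_files(repo, window_tokens=50_000)
print("seen:  ", seen)
print("unseen:", unseen)
```

In this toy setup the batch job never makes it into the window, so nothing the model generates can account for it. The dependency still exists in production whether or not the model saw it.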

Subjective Speed vs. Objective Velocity: The 19% Slower Reality

The most damning evidence against unconstrained AI in legacy environments comes from the METR Randomized Controlled Trial published in 2025. This was the gold standard of productivity research. They took highly experienced developers, placed them in massive, complex brownfield repositories, and measured their performance with and without frontier AI tools [2, 17].

The results were a complete shock to the industry. Developers using AI tools actually took 19% longer to complete complex legacy tasks compared to a manual control group [2, 18].

Source: METR Randomized Controlled Trial (2025/2026)

What makes this paradox so dangerous is the psychological component. There is a massive gap between perception and reality. Prior to the study, both experts and developers predicted AI would yield a 24% to 39% speedup [17]. Even more alarmingly, after completing the tasks and objectively recording slower times, the AI-assisted developers still self-reported feeling 20% faster [1, 11].

This subjective gap obscures the immense cognitive overhead of using AI in tightly coupled architectures. Developers feel fast because the AI types out 50 lines of code instantly. But they spend the next three hours painstakingly debugging the subtle, hallucinated errors the AI introduced into the legacy database schema. Prompting, verifying, and correcting an LLM inside a fragile monolith takes significantly more mental energy than just writing the correct code manually.

Unconstrained Generation and the Spike in Structural Debt

When you deploy AI without strict architectural guardrails, you do not get modernization. You get accelerated entropy. Because AI tools are optimized to generate code quickly, they often default to mimicking the bad patterns that already exist in the legacy repository.

Extensive analysis of over 200 million lines of changed code indicates that since the widespread adoption of AI, true architectural refactoring has collapsed. Instead, copy-pasted "clone" code has surged drastically [7, 8]. AI integration in legacy systems has triggered a massive 98% surge in Pull Request volumes [20]. Because developers are generating more code than they can accurately comprehend, the burden shifts entirely to the review phase. Consequently, organizations are seeing a 91% increase in PR review times [20]. We are essentially using AI to generate technical debt at machine speed, suffocating our senior engineers in a mountain of unverified, probabilistically generated spaghetti code.

The Bifurcated Tooling Landscape: Generalists vs. Deterministic Comprehension

Because of the severe limitations of basic generative AI in brownfield environments, the tooling landscape in 2026 has violently bifurcated. Engineering leaders must now understand the fundamental difference between probabilistic code generation and deterministic codebase comprehension. If you use the wrong category of tool for a legacy modernization project, you will inevitably trigger catastrophic architectural drift.

Why General-Purpose LLMs Fail Tightly Coupled Monoliths

General-purpose AI tools, such as GitHub Copilot or standard chat-based interfaces, are fundamentally probabilistic engines. They operate by predicting the next most likely sequence of tokens based on their training data and the immediate local context window. They are exceptionally good at autocompleting a standard React component or generating a regex string.

However, they are wholly inadequate for reverse-engineering a tightly coupled, fifteen-year-old monolith. General-purpose tools probabilistically generate code that syntactically mimics legacy logic, but they fail completely on historical constraints [11, 12]. If your legacy system relies on an undocumented, asynchronous batch process that updates a specific database column every night, a standard LLM will not know that constraint exists unless it is explicitly documented in the local file it is reading. It will confidently generate a new microservice that completely ignores the batch process, resulting in silent data corruption in production.

Source: Industry Codebase Telemetry Analysis (2026)

This is why we see the massive spike in review times. You cannot trust probabilistic generation when the stakes involve core business data. You need deterministic guarantees.

The Role of Deterministic ASTs and Graph Databases

To safely modernize a legacy system, you must first comprehend it with absolute, mathematical certainty. This is where specialized comprehension platforms like Thoughtworks CodeConcise or IBM Mono2Micro become absolutely mandatory [5, 6].

These specialized tools do not just read code as raw text. Instead, they parse the entire legacy repository into deterministic Abstract Syntax Trees (ASTs). An AST is a hierarchical representation of the syntactic structure of the source code. By analyzing it, a tool can derive exactly which functions, variables, and classes reference one another. Once the code is parsed into an AST, these platforms often load that structural data into graph databases.

By querying the graph database, an engineering team can instantly trace the true execution path of any legacy feature. You can see exactly which modules interact with the specific database table you want to isolate. This is not probabilistic guessing. It is an exact, deterministic map of your actual architectural reality.
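The idea can be sketched at toy scale with Python's standard-library `ast` module. This is not how CodeConcise or Mono2Micro work internally, which the sources here do not describe. It is a stand-in that parses a small invented Python snippet, uses a plain dictionary in place of a graph database, and only detects direct calls by bare function name.

```python
import ast

# Hypothetical legacy module used purely for illustration.
LEGACY_SOURCE = """
def load_ledger():
    return read_table("ledger")

def reconcile():
    rows = load_ledger()
    return post_adjustments(rows)

def post_adjustments(rows):
    return write_table("ledger", rows)
"""


def build_call_graph(source: str) -> dict[str, set[str]]:
    """Map each top-level function to the bare names it calls directly."""
    graph: dict[str, set[str]] = {}
    for node in ast.parse(source).body:
        if not isinstance(node, ast.FunctionDef):
            continue
        callees = graph.setdefault(node.name, set())
        for child in ast.walk(node):
            # Only plain calls like foo(...); method calls are ignored here.
            if isinstance(child, ast.Call) and isinstance(child.func, ast.Name):
                callees.add(child.func.id)
    return graph


def callers_of(graph: dict[str, set[str]], target: str) -> set[str]:
    """Answer the 'who touches this?' query against the graph."""
    return {fn for fn, callees in graph.items() if target in callees}


graph = build_call_graph(LEGACY_SOURCE)
print(callers_of(graph, "write_table"))
print(callers_of(graph, "load_ledger"))
```

The same source always produces the same graph, which is the property that matters. A production tool would add data-flow edges, database table references, and job-scheduler links on top of this skeleton.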

Safely Traversing Dependencies Before Code Generation

You should never write a single line of modernized code until you have completely mapped the dependencies of the legacy component you are trying to replace.

The specialized AST-driven platforms allow AI to safely traverse these complex dependency graphs before any code generation occurs. Once the boundaries of a specific legacy domain are mathematically proven, you can then safely use generative AI to translate that isolated logic into a modern language or framework. The AI is no longer guessing context. It is operating within a strictly bounded, deterministically verified sandbox.

This bifurcated approach solves the context window problem. You use ASTs and graph databases to map the monolith and draw the architectural boundaries. Only then do you deploy the probabilistic LLMs to translate the localized logic inside those boundaries. Attempting to skip the comprehension phase and jumping straight to generation is the root cause of almost every failed AI modernization initiative.
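As a sketch of what "mapping the boundary first" means in practice, the snippet below walks a dependency graph to find everything a component transitively relies on, then lists the edges that would cross a proposed extraction boundary. The module names and the graph are invented. A real graph would come from the AST and graph-database tooling described above.

```python
from collections import deque


def transitive_dependencies(graph: dict[str, set[str]], start: str) -> set[str]:
    """Breadth-first walk returning everything `start` depends on."""
    seen: set[str] = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for dep in graph.get(node, set()):
            if dep not in seen:
                seen.add(dep)
                queue.append(dep)
    seen.discard(start)  # a cycle can lead back to the starting node
    return seen


def boundary_violations(graph: dict[str, set[str]],
                        candidate: set[str]) -> set[tuple[str, str]]:
    """Edges that leave the proposed boundary and still need resolving."""
    return {
        (src, dst)
        for src in candidate
        for dst in graph.get(src, set())
        if dst not in candidate
    }


# Hypothetical module-level dependency graph.
deps = {
    "invoicing": {"tax", "ledger"},
    "tax": {"rates"},
    "ledger": {"nightly_batch"},
    "rates": set(),
    "nightly_batch": set(),
}

print(transitive_dependencies(deps, "invoicing"))
print(boundary_violations(deps, {"invoicing", "tax", "rates"}))
```

A non-empty violation set is the signal to stop: either widen the boundary or design an explicit interface for that edge before any code is generated. In this example, extracting invoicing without addressing `ledger` would silently drop its link to the nightly batch job.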

Execution Architecture: Defensible Phased Migration Over Big-Bang Rewrites

Having established the macro-economic urgency of modernization and the critical limitations of AI tooling, we must finally address the actual execution strategy. The architectural decision of how to physically transition away from the legacy system is where most CTOs either secure their organization's future or destroy their own careers. Historically, the industry has engaged in a bitter debate between the "big-bang" full system rewrite and the phased, incremental migration. In 2026, the empirical data leaves virtually no room for debate.

Deconstructing the Big-Bang Failure Rate

The big-bang approach requires halting core product innovation to rebuild the entire legacy system from scratch, culminating in a single, massive cutover event. The industry discourse frequently cites a failure rate of 70% to 80% for these full system rewrites. We must be precise with our data here, as this statistic is often misunderstood.

A deep deconstruction of foundational data from the Standish Group's CHAOS report reveals that outright cancellations of large monolithic IT projects hover around 20% to 30% [15].

However, when you combine the completely failed projects with the heavily "challenged" projects (those that finish massively over budget, severely delayed, or missing critical functionality), the failure rate indeed approaches that terrifying 80% threshold [10, 15].

Big-bang rewrites are fundamentally flawed because they demand multi-year feature freezes. You are asking the business to stop competing in the market for three years while engineering attempts to hit a moving target. By the time the new system is ready for the cutover event, the business requirements have completely changed. Furthermore, the risk of a catastrophic data migration failure during the single cutover window is exceptionally high. Unless you have virtually unlimited capital and the ability to freeze market innovation indefinitely, the big-bang approach is an unacceptable operational risk.

The Mechanics of the Strangler Fig Pattern in 2026

The empirical consensus among senior architects heavily favors phased migrations, structurally typified by the Strangler Fig pattern. Coined by Martin Fowler, this methodology utilizes proxy routing layers to incrementally replace legacy endpoints one by one.

In practice, you stand up a new API gateway or proxy layer in front of the legacy monolith. At first, the proxy routes every request straight through to the old system. You then isolate a single domain boundary within the monolith, use AST tooling to map its dependencies, and rewrite that specific module as a modern microservice. Once the new service is validated, you update the proxy to route traffic for that feature to it. The legacy system remains completely operational the entire time.
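The routing decision at the heart of the pattern is small enough to sketch directly. The class below is an illustrative model, not a real gateway. The class name, the upstream hostnames, and the path-prefix matching rule are all assumptions, and in production this logic would live in your API gateway or reverse proxy configuration.

```python
from dataclasses import dataclass, field


@dataclass
class StranglerRouter:
    """Routes by path prefix; anything not yet migrated goes to legacy."""
    legacy_upstream: str
    migrated: dict[str, str] = field(default_factory=dict)

    def migrate(self, path_prefix: str, upstream: str) -> None:
        """Cut one feature over to its new service."""
        self.migrated[path_prefix] = upstream

    def rollback(self, path_prefix: str) -> None:
        """Send a feature back to the monolith if the new service misbehaves."""
        self.migrated.pop(path_prefix, None)

    def resolve(self, path: str) -> str:
        """Pick the upstream for a request path (longest matching prefix wins)."""
        matches = [
            prefix for prefix in self.migrated
            if path == prefix or path.startswith(prefix.rstrip("/") + "/")
        ]
        if not matches:
            return self.legacy_upstream
        return self.migrated[max(matches, key=len)]


router = StranglerRouter(legacy_upstream="http://legacy.internal")
print(router.resolve("/invoices/42"))   # nothing migrated yet

router.migrate("/invoices", "http://invoices-svc.internal")
print(router.resolve("/invoices/42"))   # now served by the new service
print(router.resolve("/payroll/run"))   # still served by the monolith
```

The `rollback` method is the point of the whole design. Because each cutover is one routing entry, reverting a bad migration is a configuration change rather than an emergency redeploy.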

This approach provides continuous, verifiable business value. You are not waiting three years to see a return on investment. Case studies extensively validate this method. For example, ING Bank successfully transitioned 1.5 million lines of COBOL to Java using incremental refactoring [6]. Similarly, engineering teams at Carta utilized a zero-downtime phased approach to completely refactor their complex HR integration architecture [7]. While phased migrations are inherently slower on a calendar timeline, they dramatically limit operational risk by allowing engineering teams to validate assumptions in production every single week.

Establishing CI/CD Guardrails for AI-Assisted Migration

To execute a Strangler Fig migration successfully in the era of AI, engineering leadership must implement draconian CI/CD guardrails. Because we know that AI can generate syntactically correct but logically flawed code at extreme velocity, your automated testing pipeline must become the ultimate gatekeeper.

You cannot rely on manual pull request reviews to catch AI hallucinations inside complex legacy logic. The pipeline must enforce strict automated CI/CD validation before any AI-assisted code merges into the mainline. This includes automated unit testing, integration testing against the legacy database constraints, and deterministic architecture scanning to prevent the new microservice from accidentally coupling back into the legacy monolith.
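One of those guardrails, the architecture scan, can be approximated with a short script. The sketch below uses Python's standard `ast` module to flag any absolute import of a forbidden package. The package name `legacy_billing` and the sample source are hypothetical, and a real pipeline would run this over every changed file and fail the build on any hit.

```python
import ast


def forbidden_imports(source: str, forbidden_roots: set[str]) -> list[str]:
    """Return imported module names whose top-level package is forbidden.

    Only absolute imports are checked; relative imports are skipped.
    """
    found = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            names = [node.module]
        else:
            continue
        for name in names:
            if name.split(".")[0] in forbidden_roots:
                found.append(name)
    return found


# Hypothetical new microservice that has coupled itself back to the monolith.
NEW_SERVICE_SOURCE = """
import json
import legacy_billing.db
from legacy_billing.models import Invoice

def handler(event):
    return json.dumps({"ok": True})
"""

violations = forbidden_imports(NEW_SERVICE_SOURCE, {"legacy_billing"})
for name in violations:
    print(f"forbidden import of legacy module: {name}")
```

In CI, the script would exit with a non-zero status whenever `violations` is non-empty, which blocks the merge without depending on a reviewer noticing the import. The check is deterministic, so it behaves the same way on the thousandth AI-generated pull request as on the first.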

Modernization is no longer simply an exercise in writing new code. It is an exercise in managing complex systemic transitions with absolute rigor, patience, and telemetry-driven execution. You must reject the vendor promises of instant AI rewrites, avoid the massive risk of big-bang cutovers, and commit to the disciplined, incremental strangulation of your technical debt.

If your organization is trapped allocating 80% of its budget to legacy maintenance and you need a defensible, risk-managed path out, Altimi's Modernization Discovery Sprint delivers a concrete execution plan in 2-4 weeks. We provide a deterministic architecture assessment, a strict 90-day phased execution roadmap, and a transparent business case mapping your CAPEX/OPEX projections for €8,500. Stop guessing at the scale of your technical debt and book a Modernization Assessment today to secure your engineering future.